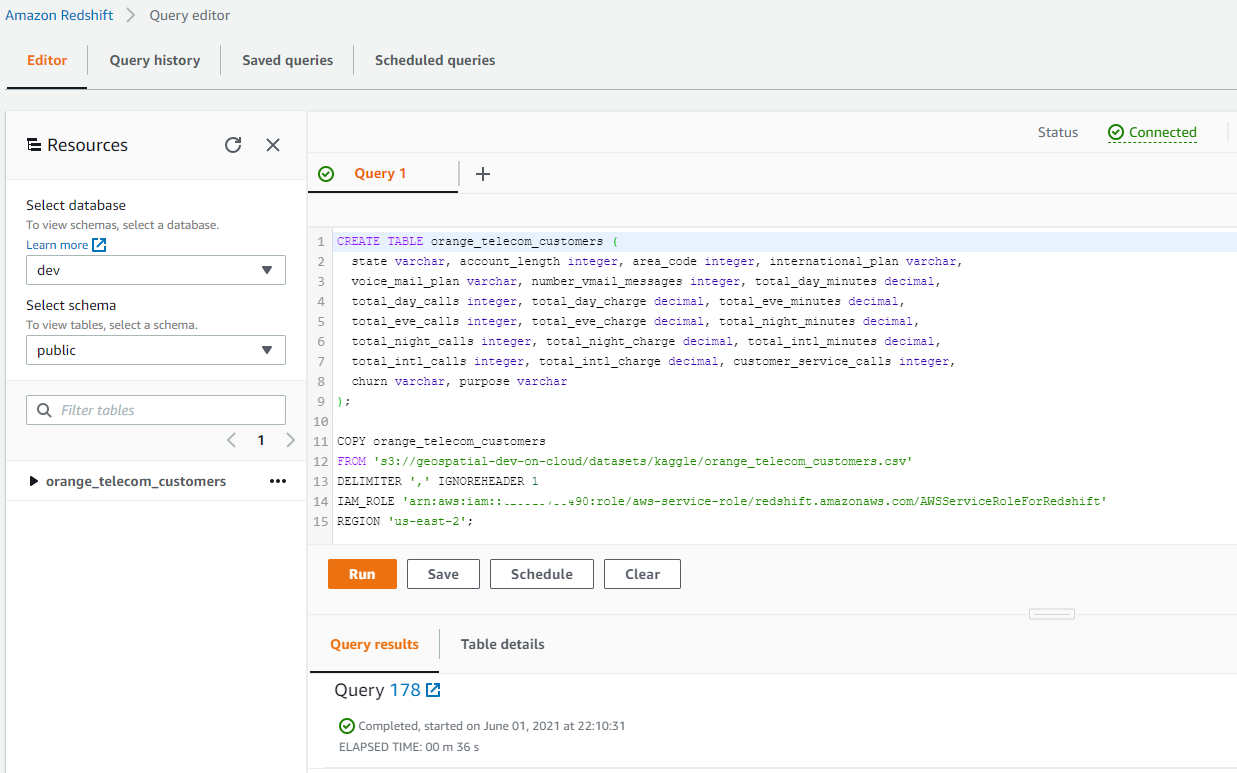

Create the offline feature group in SageMaker Feature Store and ingest data into the feature group.The workflow contains the following steps: The following diagram illustrates solution architecture. For an introduction to SageMaker Feature Store and instructions on setting it up, see Getting started with Amazon SageMaker Feature Store. The offline store uses an Amazon Simple Storage Service (Amazon S3) bucket for storage and can also fetch data using Amazon Athena queries. We also need an offline feature store to store features in feature groups. For an introduction to Redshift ML and instructions on setting it up, see Create, train, and deploy machine learning models in Amazon Redshift using SQL with Amazon Redshift ML. To get started, we need an Amazon Redshift Serverless data warehouse with the Redshift ML feature enabled and an Amazon SageMaker Studio environment with access to SageMaker Feature Store. To build this, we need to engineer features that describe an individual credit card’s spending pattern, such as the number of transactions or the average transaction amount, and also information about the merchant, the cardholder, the device used to make the payment, and any other data that may be relevant to detecting fraud. Overview of solutionįor this post, we create an ML model to predict if a transaction is fraudulent or not, given the transaction record. We also show you how to use familiar SQL statements to create and train ML models by combining shared features from the centralized store with local features and use these models to make in-database predictions on new data for use cases such as fraud risk scoring. In a local feature store, you can store sensitive data that can’t be shared across the organization for regulatory and compliance reasons. 
In this post, we discuss the combined feature store pattern, which allows teams to maintain their own local feature stores using a local Redshift table while still being able to access shared features from the centralized feature store. As a data scientist building an ML model, you may have access to the identifying information but not the transaction information, and having access to a feature store solves this. For example, an ML model to identify fraudulent financial transactions needs access to both identifying (device type, browser) and transaction (amount, credit or debit, and so on) related features. However, one challenge in training a production-ready ML model using SageMaker Feature Store is access to a diverse set of features that aren’t always owned and maintained by the team that is building the model. Additionally, you can import existing SageMaker models into Amazon Redshift for in-database inference or remotely invoke a SageMaker endpoint.Īmazon SageMaker Feature Store is a fully managed, purpose-built repository to store, share, and manage features for ML models. Redshift ML currently supports ML algorithms such as XGBoost, multilayer perceptron (MLP), KMEANS, and Linear Learner. We covered in a previous post how you can use data in Amazon Redshift to train models in Amazon SageMaker, a fully managed ML service, and then make predictions within your Redshift data warehouse.

Amazon Redshift ML makes it easy for SQL users to create, train, and deploy ML models using SQL commands familiar to many roles such as executives, business analysts, and data analysts. Data analysts and database developers want to use this data to train machine learning (ML) models, which can then be used to generate insights on new data for use cases such as forecasting revenue, predicting customer churn, and detecting anomalies. In addition, we believe this new feature will enable us to operate a high-performant data analytics platform at an affordable cost, saving 20% more than other analytics vendors.Amazon Redshift is a fast, petabyte-scale, cloud data warehouse that tens of thousands of customers rely on to power their analytics workloads. With the introduction of smaller RPU configuration in Redshift Serverless, we no longer need to worry about infrastructure tuning or security risks and can accommodate many small analytics workloads.

We already heavily use AWS services and Amazon Redshift Serverless is perfect for us to consolidate the entire analytics workload onto one platform. For these small analytics workloads we often used services from other vendors that required us to introduce security concerns in transferring data. Our analytics workloads are often small since we are running multiple different data exploration and experimental workloads in our growing business as a startup company. “Schoo encourages people to keep learning new things for a lifetime by offering live video streaming services and online community.
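The spending-pattern features described in the solution overview (the number of transactions and the average transaction amount per credit card) can be illustrated with a small, plain-Python sketch. It assumes an in-memory list of transactions; the `Transaction` type, its fields, and `card_spending_features` are hypothetical names used only for illustration and are not part of Redshift ML or SageMaker Feature Store. In practice, features like these are what would be computed at scale and ingested into a feature group.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass
class Transaction:
    # Hypothetical minimal transaction record: which card was used and how much was spent.
    card_id: str
    amount: float


def card_spending_features(transactions):
    """Compute per-card transaction count and average transaction amount."""
    totals = defaultdict(float)  # running sum of amounts per card
    counts = defaultdict(int)    # number of transactions per card
    for tx in transactions:
        totals[tx.card_id] += tx.amount
        counts[tx.card_id] += 1
    # One feature record per card; every card in `counts` has count >= 1,
    # so the division is safe.
    return {
        card_id: {
            "num_transactions": counts[card_id],
            "avg_transaction_amount": totals[card_id] / counts[card_id],
        }
        for card_id in counts
    }


if __name__ == "__main__":
    txs = [
        Transaction("card-1", 20.0),
        Transaction("card-1", 40.0),
        Transaction("card-2", 15.5),
    ]
    print(card_spending_features(txs))
    # → {'card-1': {'num_transactions': 2, 'avg_transaction_amount': 30.0},
    #    'card-2': {'num_transactions': 1, 'avg_transaction_amount': 15.5}}
```

The same aggregation maps naturally to a SQL `GROUP BY` on the card identifier; the Python version is only meant to make the shape of the resulting feature records concrete.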
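The combined feature store pattern amounts to joining shared features from the centralized store with a team’s local features on a common record identifier before training. The sketch below shows that join with plain dictionaries. It is an illustration under stated assumptions, not the post’s implementation: `combine_features` and `record_id` are hypothetical names, and which features live in the shared store versus the local store is assumed here for the example.

```python
from typing import Dict, Iterable, List

FeatureRow = Dict[str, object]


def combine_features(
    shared: Dict[str, FeatureRow],
    local: Dict[str, FeatureRow],
    record_ids: Iterable[str],
) -> List[FeatureRow]:
    """Inner-join shared and local features on a common record identifier."""
    combined: List[FeatureRow] = []
    for record_id in record_ids:
        # Skip records missing from either store (inner-join semantics).
        if record_id not in shared or record_id not in local:
            continue
        overlap = shared[record_id].keys() & local[record_id].keys()
        if overlap:
            # Refuse to silently overwrite a shared feature with a local one.
            raise ValueError(f"feature name collision for {record_id}: {sorted(overlap)}")
        row: FeatureRow = {"record_id": record_id}
        row.update(shared[record_id])
        row.update(local[record_id])
        combined.append(row)
    return combined


if __name__ == "__main__":
    # Assumed: transaction features published to the centralized (shared) store.
    shared = {"tx-1": {"amount": 120.0, "is_credit": True}}
    # Assumed: identifying features kept in a team's local table.
    local = {
        "tx-1": {"device_type": "mobile", "browser": "safari"},
        "tx-2": {"device_type": "desktop", "browser": "firefox"},
    }
    print(combine_features(shared, local, ["tx-1", "tx-2"]))
    # → [{'record_id': 'tx-1', 'amount': 120.0, 'is_credit': True,
    #     'device_type': 'mobile', 'browser': 'safari'}]
```

Raising on a name collision is a deliberate choice in this sketch: when features come from different owners, silently letting one source win can hide data problems.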
